Where criminology meets big data business analytics

Marketing speaks to the Aussie data analytics company that’s cracking cause-and-effect modelling with the mathematics behind econometric modelling, medical diagnosis and police investigation.

Of the countless companies springing up in the data analytics space, one of the more interesting is an Australian outfit founded by Nicholas Davies, an ex-agency boss, and John Galloway, an analytics software developer with a knack for building tools that make previously hidden insights visual.

Brand Communities’ premise is that the well-known ‘correlation doesn’t equal causation’ argument, while true, is limiting. Yes, it is impossible to prove causation absolutely, but advances in statistical methods now allow it to be established with probabilities approaching 99%, depending on the data.

We don’t need to go into the details here, thankfully, but what’s called ‘contributory causality mathematics’ has become commonplace in medical diagnosis, police investigations and econometric analyses to assess cause and effect probabilities with the highest levels of accuracy.

Professor Galloway is the brains behind the operation, while Davies brings the business chops. Galloway previously developed analytics software used by top national security agencies such as the FBI, MI5 and Mossad.

His software has been used to identify major criminals such as Ivan Milat and the Bali bombers. It has also been used by governments to fight money laundering and detect fraud, and by much of the North American insurance industry.

Brand Communities’ primary commercial product is Centrifuge42, a cause-and-effect engine that the company says has cracked the code of identifying exactly which factors, from the weather to consumer confidence to a competitor’s pricing, influence a nominated variable, and by how much.

Trial clients have included Telstra, Toyota, Commonwealth Bank, Nestle, Subaru, Kellogg and Qantas, and the company says it is in the early stages with several clients in the US.

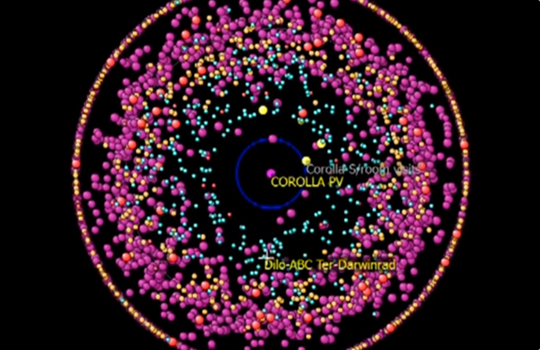

Put simply, Centrifuge42 takes data inputs from a variety of sources and visually shows which of them affect a nominated variable. In the image above, thousands of data points represent factors influencing the central question, with the points closest to the centre having the strongest impact. The weakest factors are spun out to the edges (hence the ‘centrifuge’ name).

For enterprise clients there are also tools for segmentation and forecasting. The company is also offering a leaner, online DIY platform for industry verticals and SMEs, as well as Statzball, a sports prediction and gaming platform.

Davies, who spent decades in marketing and advertising, including as part-owner of Publicis Mojo, is CEO at Brand Communities. He says the company is positioning itself around the idea of putting big data analytics back in the hands of decision-makers, rather than having the work outsourced to analysts. “We’re basically taking seriously grunt mathematics and putting it in the hands of decision-makers who can use this themselves,” he says.

As with any analysis, the output is only as good as the input. But there are a lot of possible inputs.

“We’ve obviously got very specific client data, which I can’t share, and we’ve got a whole lot of our external data that we can throw at it,” Davies says. “We get global scrapes for every country in the world every hour, for example, of economic, financial, weather, political, agricultural, Bureau of Statistics, unemployment and consumer price index.

“So if it’s having an impact on NPS [or whatever the question is] you’ll see which of these actually has an impact. The weather, rainy days, hot days, cold days, by state, etcetera… We’ve got all that already in our pot. We can just apply that to anything that’s more granular that the client gives us. That’s our pot of data.”

Additionally, the company licenses data from providers such as Roy Morgan, Experian and Nielsen, and it conducts its own proprietary research.

Centrifuge42 was originally designed as a very specific media ROI tool, letting marketing directors, brand managers and media agencies see what’s working and what isn’t in their campaigns and promotions.

But Centrifuge42’s broader potential became apparent during pilot programs with a couple of the clients mentioned earlier. “Later clients said, ‘Why don’t we just add all of this other data in to see the impact of price on my marketing activity, or the impact of my competitor’s price on my activity?’

“They actually helped us reframe the position of our company because it was originally intended as just a media ROI tool and then it turned into a marketing ROI tool. Now it’s a business ROI tool. It just kept growing in scale.”

The appeal of being able to throw a million data points into a machine and, in a matter of seconds, get a very clear picture of what’s affecting major business indicators is obvious. Isolating the factors that most strongly affect net promoter score, for example, suggests a roadmap for prioritising initiatives. “If net promoter score is my objective, these are the five things that are having a positive impact and these are the five things that are having a negative impact on net promoter score. If I want to change my net promoter score, do more of the stuff in green and do less of the stuff in red,” Davies explains.

“Then, if I then wanted to forecast that, I say, ‘Well what if I halve my price and open six more stores? What if my competitors launch a new two-for-one offer?’ You can just play what if scenario forecasting until your eyes pop out. It gives you the priority of what’s important and then enables you to then forecast against what are some of those things that are the most important to change in the future, what’s going to happen?”

The accuracy of the forecasts depends on the quality of the data fed into the software. “That’s the most critical component. The more granular the data, the more accurate the predictions,” Davies says.

“For example, we’ve got a guy using this for the share market now, and he’s using data current to fractions of a second. His accuracy is amazing, up to 97 or 98%. If someone only uses, say, monthly data, then obviously the accuracy levels drop considerably. It’s a matter of how many data points they provide.

“On the assumption a client gives us a lot of good granular data, we’re into the mid to high 90s. If they give us crap data, well it’s going to be in the 30s and 40s.”

Davies says most clients have daily sales data, which can then be compared with media data, which is typically monthly. By extrapolating, “They’re able to look at what types of promotions, side stacks, price elasticity, advertising, are you in a catalogue, not in a catalogue, winter, hot days versus rainy days versus whatever. Then you can go, ‘Right, the ultimate model moving forward is X, Y and Z.’”

At this point you might be wondering how much access to this software will cost. Davies says an enterprise considering a one-off pilot is looking at a base cost of $100,000 and up, depending on how many central questions it is interrogating, plus hourly fees for data input and cleaning if needed, and additional costs for data licensed from third-party vendors and for Brand Communities’ own data offerings.

Perhaps controversially, Brand Communities is also floating the idea of a data co-op, or library: an open-market resale platform where clients could recoup money from their ageing quantitative research by sharing it with other companies (anonymously, of course).